The Neural Prism 3157080190 Apex Node integrates memory, compute, and communication layers into a modular unit for neural workloads. It supports on-device inference for real-time analytics while keeping outcomes governed and auditable. The approach relies on lightweight architectures, iterative pruning, and deployment that scales across on-device, edge, and centralized layers. The sections below cover what the node is, how on-device inference supports real-time analytics, the deployment roadmap, and use cases across industries.

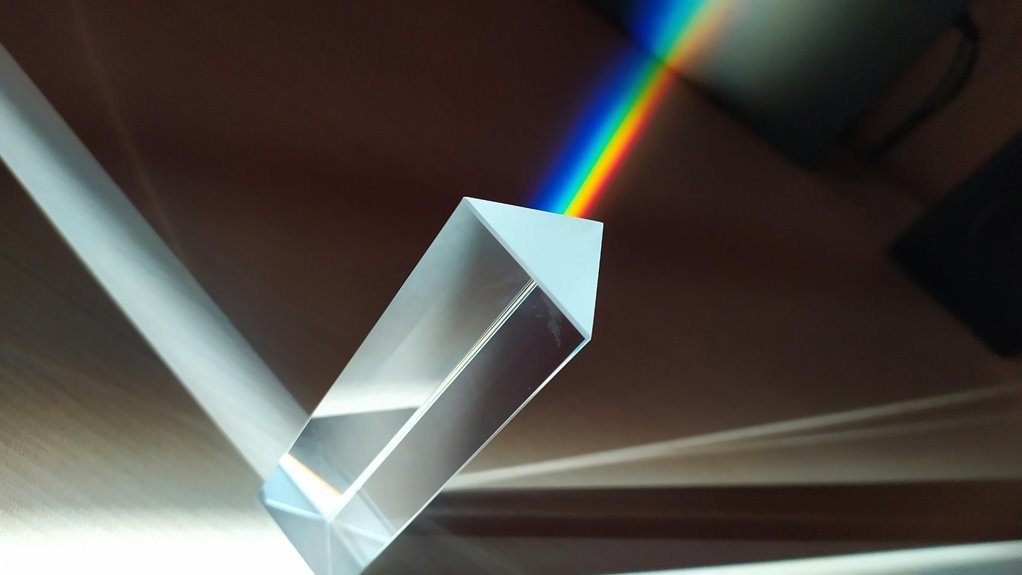

What Is the Neural Prism 3157080190 Apex Node?

The Neural Prism 3157080190 Apex Node is a modular computing unit designed to run neural network workloads efficiently. It is built from components, so deployments can scale and architectures can stay flexible. The "neural prism" approach at its center integrates memory, compute, and communication layers in a single unit. As an apex node, it coordinates resource distribution, analytics readiness, and adaptable performance profiles.

How On-Device Inference Powers Real-Time Analytics

On-device inference enables real-time analytics by running neural network computations where the data is produced, which removes the round trip to a centralized server. This reduces latency and saves bandwidth, so decisions can adapt at the source.
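The latency and bandwidth argument above can be made concrete with a small back-of-the-envelope calculation. The sketch below is only illustrative: the `LatencyBudget` class, the `uplink_bytes_saved_per_second` helper, and every number in it are assumptions made for this example, not measurements or interfaces of the Apex Node.

```python
from dataclasses import dataclass


@dataclass
class LatencyBudget:
    """Per-request latency components in milliseconds (illustrative values only)."""
    inference_ms: float
    network_round_trip_ms: float = 0.0
    queueing_ms: float = 0.0

    def total_ms(self) -> float:
        # End-to-end latency is the sum of all components.
        return self.inference_ms + self.network_round_trip_ms + self.queueing_ms


def uplink_bytes_saved_per_second(raw_bytes: int, result_bytes: int,
                                  events_per_second: float) -> float:
    """Uplink traffic avoided when only the inference result leaves the device."""
    return (raw_bytes - result_bytes) * events_per_second


# Hypothetical numbers: a slower on-device model versus a faster remote one.
on_device = LatencyBudget(inference_ms=18.0)
remote = LatencyBudget(inference_ms=4.0, network_round_trip_ms=60.0, queueing_ms=5.0)

print(f"on-device: {on_device.total_ms():.1f} ms")
print(f"remote:    {remote.total_ms():.1f} ms")
print(f"uplink saved: {uplink_bytes_saved_per_second(200_000, 64, 10.0):.0f} B/s")
```

With these assumed figures, the on-device path is faster end to end even though its model runs more slowly, because the network round trip dominates the remote path. Real numbers depend on the hardware and the network, so the comparison should be rerun with measured values.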

Two considerations remain open before deployment: the hardware constraints of the target device and the model compression needed to fit within them.

Edge optimizations focus on lightweight architectures, efficient memory use, and parallel processing, so that insights stay responsive without giving up the device's autonomy.

Deployment Roadmap: From Data Orchestration to Pruning

The roadmap treats data orchestration as the foundation, then adopts an iterative pruning strategy backed by rigorous validation. The goal is scalable deployment, efficient performance, and autonomy in edge environments.
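The article does not say which pruning method the roadmap uses. As one possible reading, the sketch below shows iterative magnitude pruning with a validation gate: each round zeroes a fraction of the smallest remaining weights, and a round is kept only if validation passes. The function names, the flat weight list, and the `validate` check are all hypothetical. A real pipeline would validate on held-out accuracy and usually fine-tune between rounds, which is omitted here.

```python
def prune_smallest(weights, fraction):
    """Zero out `fraction` of the remaining non-zero weights with the smallest magnitude."""
    nonzero = sorted((abs(w), i) for i, w in enumerate(weights) if w != 0.0)
    n_prune = int(len(nonzero) * fraction)  # rounds down
    pruned = list(weights)
    for _, i in nonzero[:n_prune]:
        pruned[i] = 0.0
    return pruned


def sparsity(weights):
    """Share of weights that are exactly zero."""
    return sum(1 for w in weights if w == 0.0) / len(weights)


def iterative_prune(weights, fraction_per_round, rounds, validate):
    """Prune round by round; stop at the first round that fails validation
    and return the last accepted weights."""
    current = list(weights)
    for _ in range(rounds):
        candidate = prune_smallest(current, fraction_per_round)
        if not validate(candidate):
            break
        current = candidate
    return current


# Toy weights and a stand-in validation rule (assumption): accept a candidate
# only if it keeps at least 90% of the original total weight magnitude.
weights = [0.9, -0.05, 0.4, 0.01, -0.7, 0.2, -0.03, 0.6]
original_magnitude = sum(abs(w) for w in weights)


def validate(candidate):
    return sum(abs(w) for w in candidate) / original_magnitude >= 0.9


result = iterative_prune(weights, fraction_per_round=0.25, rounds=3, validate=validate)
print(result)
print(f"sparsity: {sparsity(result):.3f}")
```

In this toy run the first two rounds pass and the third is rejected, so three of the eight weights end up at zero. The stopping rule shows why validation matters: without it, pruning would continue past the point where the model degrades.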

Use Cases and Best Practices Across Industries

Across industries, use cases for the Neural Prism 3157080190 Apex Node center on real-time, privacy-preserving inference at the edge, which supports rapid decisions where connectivity is limited and labeled data is scarce.

The main sectors are healthcare, manufacturing, and energy. In each, best practice is to prioritize robust data governance and to monitor continuously for model drift, so that outcomes stay compliant and auditable. Deployments should also remain resilient, interoperable, and scalable across heterogeneous environments.
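Monitoring for model drift can take many forms, and the article does not name one. As a minimal sketch, the example below uses the population stability index (PSI), a common drift statistic chosen here as an assumption. It compares the distribution of a model input or score at reference time with the distribution seen live. The bin edges, sample values, and alert threshold are all illustrative.

```python
import math


def bin_shares(values, edges):
    """Share of values per bin. Bins are [edges[i], edges[i+1]), with the last
    bin also including its right edge. Values outside the edges are ignored."""
    counts = [0] * (len(edges) - 1)
    for v in values:
        for i in range(len(counts)):
            is_last = i == len(counts) - 1
            if edges[i] <= v < edges[i + 1] or (is_last and v == edges[-1]):
                counts[i] += 1
                break
    total = sum(counts)
    if total == 0:
        raise ValueError("no values fall inside the bin edges")
    return [c / total for c in counts]


def psi(reference, live, edges, eps=1e-6):
    """Population stability index: sum over bins of (live - ref) * ln(live / ref).
    Shares are floored at `eps` so empty bins do not produce log(0)."""
    ref = [max(p, eps) for p in bin_shares(reference, edges)]
    cur = [max(p, eps) for p in bin_shares(live, edges)]
    return sum((c - r) * math.log(c / r) for r, c in zip(ref, cur))


# Illustrative data: model scores at validation time versus scores seen in the field.
edges = [0.0, 0.5, 1.0]
reference_scores = [0.1, 0.2, 0.3, 0.6, 0.7, 0.9]
live_scores = [0.6, 0.7, 0.8, 0.9, 0.95, 0.2]

drift = psi(reference_scores, live_scores, edges)
ALERT_THRESHOLD = 0.25  # assumption: tune per model and per feature
print(f"PSI = {drift:.3f}, alert = {drift > ALERT_THRESHOLD}")
```

Logging each PSI value together with the bin edges and the reference window gives an audit trail for why an alert fired, which fits the governance goals described above.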

Conclusion

The Neural Prism 3157080190 Apex Node consolidates memory, compute, and communication into a scalable, audit-ready platform for neural workloads. It enables on-device inference with low latency and strong privacy, while orchestrating resources across edge and centralized layers. Through iterative pruning and lightweight architectures, it supports autonomous decision-making and governance. In short, it coordinates these components to deliver real-time analytics with auditable, resilient outcomes.