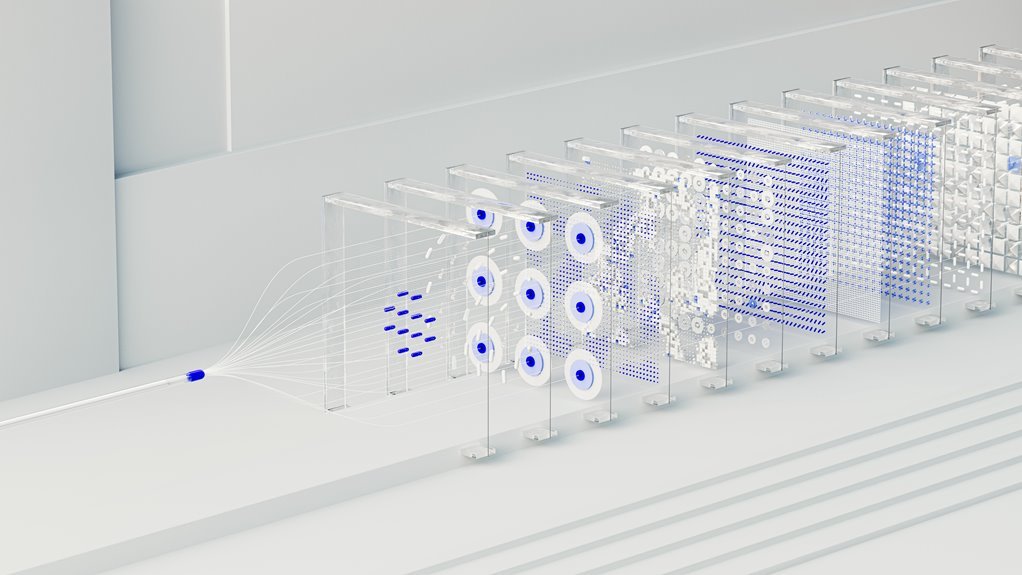

The Neural Flow 3202560223 Apex Node aggregates edge compute for distributed inference with deterministic cross-node scheduling. Its design emphasizes modularity, scalable orchestration, and lightweight messaging to balance latency, governance, and data sovereignty. The architecture supports local inference, edge caching, and secure deployments, which makes it a candidate for manufacturing, autonomous systems, and digital health. Its main value lies in coordinated task offloading and repeatable outcomes, yet open questions remain about deployment complexity and security guarantees as systems scale.

How the Neural Flow Apex Node Works at the Edge

The Neural Flow Apex Node operates at the edge by distributing computation across local devices, which reduces latency and bandwidth consumption while keeping model accuracy intact. It combines three mechanisms: coordinated task offloading, local inference, and synchronized model updates so that every device serves the same model version. Edge optimization keeps data movement to a minimum, and measured latency benchmarks guide how work is partitioned, what is cached, and how resources are allocated. The goal is predictable performance across heterogeneous devices and varying network conditions.
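The latency-guided offloading described above can be illustrated with a minimal sketch. It assumes each candidate device has a benchmarked compute cost and a known link bandwidth, and it picks the device with the lowest estimated end-to-end latency. The names `Device`, `estimated_latency_ms`, and `pick_device` are hypothetical and are not part of any documented Neural Flow interface.

```python
from dataclasses import dataclass
from typing import Optional, Sequence


@dataclass(frozen=True)
class Device:
    """Hypothetical description of one candidate device."""
    name: str
    ms_per_work_unit: float            # benchmarked compute cost on this device
    link_mbps: Optional[float] = None  # None means the input is already local


def estimated_latency_ms(device: Device, work_units: float, payload_mb: float) -> float:
    """Compute time plus the time needed to ship the input over the link."""
    compute_ms = device.ms_per_work_unit * work_units
    if device.link_mbps is None:
        return compute_ms
    # Megabytes -> megabits, divided by Mbps gives seconds, then to milliseconds.
    transfer_ms = payload_mb * 8.0 / device.link_mbps * 1000.0
    return compute_ms + transfer_ms


def pick_device(devices: Sequence[Device], work_units: float, payload_mb: float) -> Device:
    """Choose the lowest estimated latency; ties are broken by name for repeatability."""
    return min(
        devices,
        key=lambda d: (estimated_latency_ms(d, work_units, payload_mb), d.name),
    )


if __name__ == "__main__":
    sensor = Device("sensor", ms_per_work_unit=5.0)
    gateway = Device("gateway", ms_per_work_unit=1.0, link_mbps=100.0)
    print(pick_device([sensor, gateway], work_units=10, payload_mb=1.0).name)
    print(pick_device([sensor, gateway], work_units=10, payload_mb=0.1).name)
```

In this toy setup a 1 MB input adds about 80 ms of transfer time over a 100 Mbps link, so the slower local device still wins. Shrinking the input to 0.1 MB tips the decision toward the faster gateway, which is the kind of tradeoff latency benchmarks are meant to expose.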

Key Architecture and Core Capabilities

The architecture determines how the Neural Flow Apex Node orchestrates distributed computation. The design emphasizes modularity, scalable scheduling, and deterministic execution, so that the same inputs produce the same cross-node coordination. Core capabilities include orchestration of heterogeneous resources, adaptive parallelism, and lightweight messaging. A focus on edge latency and model optimization supports efficient inference, fault tolerance, and reproducible performance across diverse edge environments.
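One way to obtain deterministic cross-node assignment is rendezvous (highest-random-weight) hashing. The source does not say which technique the Apex Node uses, so the sketch below is only an illustration of the property. It assumes tasks and nodes are identified by strings, and the helper names `_score` and `assign` are hypothetical. It uses `hashlib` rather than Python's built-in `hash()`, which is salted per process for strings and would therefore not be repeatable across nodes.

```python
import hashlib
from typing import Dict, Iterable


def _score(task_id: str, node: str) -> int:
    """Stable score for a (node, task) pair, identical on every machine."""
    digest = hashlib.sha256(f"{node}:{task_id}".encode("utf-8")).digest()
    return int.from_bytes(digest[:8], "big")


def assign(task_ids: Iterable[str], nodes: Iterable[str]) -> Dict[str, str]:
    """Map each task to the node with the highest score for that task.

    The result depends only on the sets of tasks and nodes, not on the
    order in which they are supplied.
    """
    ordered_nodes = sorted(nodes)
    if not ordered_nodes:
        raise ValueError("at least one node is required")
    return {
        task: max(ordered_nodes, key=lambda n: _score(task, n))
        for task in sorted(task_ids)
    }


if __name__ == "__main__":
    tasks = ["frame-001", "frame-002", "frame-003", "frame-004"]
    plan = assign(tasks, ["node-a", "node-b", "node-c"])
    for task, node in plan.items():
        print(task, "->", node)
```

A useful side effect of this approach is that removing a node only reassigns the tasks that were placed on it; every other task keeps its node, which limits churn when a device drops out.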

Real-World Use Cases for Low-Latency AI

Real-world applications of low-latency AI span manufacturing, autonomous systems, and digital health, where immediacy of inference directly impacts safety, efficiency, and decision quality.

In each of these settings edge latency is the critical constraint, so models must be optimized to meet strict timing requirements. Meeting those requirements is what enables responsive control, rapid anomaly detection, and reliable real-time decision support in high-stakes environments.

Deployment Practices, Security, and Scalability

Deployment practices for the Neural Flow 3202560223 Apex Node center on secure, scalable, and repeatable workflows that match model capabilities to operational demands. The main considerations are deployment architecture, security controls, and scalability strategy. Edge caching reduces latency by serving repeated requests locally, and data sovereignty requirements determine where data may be stored and processed for compliance. Risk, governance, and performance tradeoffs should be evaluated objectively, particularly by organizations that want to retain control over their own infrastructure and data.
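The edge caching mentioned above can be sketched as a small in-memory cache that bounds both the number of entries and their age. This is an illustrative assumption about how such a cache might look, not the product's implementation. The class name `EdgeCache` and its parameters are hypothetical, and the clock is injectable so the expiry logic can be tested without waiting.

```python
import time
from collections import OrderedDict
from typing import Any, Callable, Hashable, Optional


class EdgeCache:
    """Hypothetical LRU cache with a per-entry time-to-live."""

    def __init__(
        self,
        max_entries: int = 128,
        ttl_seconds: float = 60.0,
        clock: Callable[[], float] = time.monotonic,
    ) -> None:
        self._max_entries = max_entries
        self._ttl = ttl_seconds
        self._clock = clock
        self._entries: "OrderedDict[Hashable, tuple]" = OrderedDict()

    def get(self, key: Hashable) -> Optional[Any]:
        """Return the cached value, or None if it is missing or expired."""
        entry = self._entries.get(key)
        if entry is None:
            return None
        value, expires_at = entry
        if self._clock() >= expires_at:
            del self._entries[key]  # drop stale results instead of serving them
            return None
        self._entries.move_to_end(key)  # mark as most recently used
        return value

    def put(self, key: Hashable, value: Any) -> None:
        """Store a value and evict the least recently used entries if full."""
        self._entries[key] = (value, self._clock() + self._ttl)
        self._entries.move_to_end(key)
        while len(self._entries) > self._max_entries:
            self._entries.popitem(last=False)


if __name__ == "__main__":
    cache = EdgeCache(max_entries=2, ttl_seconds=30.0)
    cache.put("input-hash-1", {"label": "ok"})
    print(cache.get("input-hash-1"))
```

Keeping the cache on the device that produced the result also means cached data never leaves that device, which is one simple way to stay consistent with a data sovereignty policy.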

Conclusion

The Neural Flow 3202560223 Apex Node coordinates disparate devices into a single inference system at the edge. Its modular scheduling, deterministic cross-node execution, and lightweight messaging are intended to deliver predictable, low-latency behavior, with data sovereignty and secure deployment treated as baseline requirements rather than aspirations. Real-time performance comes from disciplined partitioning and synchronized updates, which is what turns the architecture into practical gains for manufacturing, autonomous systems, and digital health, where precision matters most.